Reka’s Case For Owning AI

Plus: OpenAI exec exit, Kremlin targets messengers, and Apple home display slips.

Here’s what’s on our plate today:

🧪 Reka’s rent-versus-own AI model for serious enterprises.

🧠 Roko’s Brain Snack on owning, not renting, core models.

⚡ Quick Bits on OpenAI exit, Kremlin hacks, and Apple delay.

📊 Poll: your org’s long-term AI model strategy.

Let’s dive in. No floaties needed…

🌱 Framer for Startups

First impressions matter. Launch a stunning, production-ready site in hours with Framer, no dev team required. Early-stage startups get one year of Framer Pro free, a $360 value.

No code, no delays. Scale from MVP to full product with CMS, analytics, and AI localization. Trusted by hundreds of YC-backed founders.

*This is sponsored content

The Laboratory

How Reka AI is challenging the rent-forever model of enterprise AI

TL;DR

The meter never stops: Most enterprises access AI the way they once accessed software-as-a-service, paying per token, per call, per seat. That feels manageable in a pilot but becomes a structural cost dependency as AI moves into core operations.

Reka’s different bet: Reka AI, alongside Cohere and Mistral, is building AI designed to live inside an organization’s own infrastructure rather than on someone else’s servers.

The economics are real, with caveats: Self-hosting can be up to 18 times cheaper than frontier APIs at scale, and for regulated industries like banking and defense, keeping data in-house is less a preference and more a legal reality.

The farmer’s lesson: The John Deere story is the right frame here. Enterprises that don’t ask hard questions now about model ownership, proprietary weights, and vendor lock-in risk will wake up in the middle of their own harvest, locked out of the machine they thought they owned.

An important but often overlooked aspect of technological advancement is that, as systems become more complex, it becomes harder for users to understand how they work and how to use them effectively. A very visible example came when farmers, after generations of owning and fixing their own tractors, had to turn to the manufacturer for regular maintenance.

Machines that were once simple to fix began shipping with proprietary software, and could only be repaired after running a password-protected diagnostic system. That often meant a call to an authorized dealer, sometimes in the middle of harvest.

The struggle between farmers and Deere & Company is a classic tale of what happens when products become software platforms: ownership can quietly give way to dependence.

Something similar is playing out in artificial intelligence as the industry evolves. Many organizations scrambling to adopt AI for cognitive tasks overlook the important decision of whether to rent or own it.

So far, the predominant approach for many has been to access frontier capabilities via metered APIs, paying per token, per call, or per seat. It feels lightweight at first, with no infrastructure to manage and no models to maintain. However, over time, usage accumulates as workflows begin to depend on systems that live elsewhere. Costs scale with reliance, and what looks like a flexible tool can start to resemble a long-term dependency baked into the organization’s operating core.

Now, though, some companies are proposing a different approach, one that could become the main alternative to renting AI models from hyperscalers.

The embedded alternative

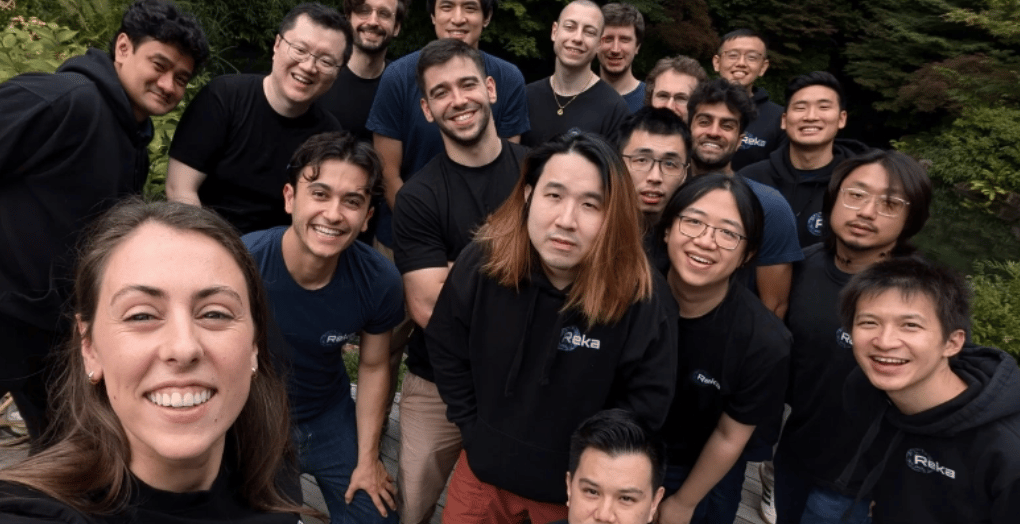

Although not many people outside enterprise technology may have heard of it, Reka AI is one such company building an alternative business model in the AI landscape.

The company has fewer than 50 employees, has raised $168M in total funding, and produces no consumer-facing products. What it does produce is a suite of AI models, Spark, Edge, Flash, and Core, explicitly tiered for different deployment contexts, from edge devices to full enterprise infrastructure. Its Flash model, with 21B parameters, can be quantized to 3.5 bits and run on a device. Core supports deployment in on-premise environments, private clouds, virtual private clouds, and fully air-gapped installations where no data ever touches the public internet.
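For a rough sense of why quantization matters for on-device use, here is a back-of-the-envelope sketch of the storage needed for the weights alone. The 21B parameter count and 3.5-bit figure come from the paragraph above; the 16-bit baseline is an assumption added for comparison, and the estimate ignores runtime memory such as activations and caches, as well as any per-block overhead a real quantization format adds.

```python
def weight_footprint_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate storage for model weights alone, in decimal gigabytes."""
    total_bits = num_params * bits_per_weight
    total_bytes = total_bits / 8
    return total_bytes / 1e9


FLASH_PARAMS = 21e9  # parameter count quoted in the text

# 3.5 bits per weight, as quoted in the text
quantized_gb = weight_footprint_gb(FLASH_PARAMS, 3.5)

# 16 bits per weight is an assumed baseline, not a figure from the article
baseline_gb = weight_footprint_gb(FLASH_PARAMS, 16)

print(f"quantized: ~{quantized_gb:.1f} GB, 16-bit baseline: ~{baseline_gb:.1f} GB")
```

Under those assumptions the weights shrink from roughly 42 GB to roughly 9 GB, which is the difference between needing server-class memory and fitting on a well-equipped device.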

The company’s proposition for enterprise AI adopters is not to rent intelligence by the token, but to own the capability, deploy it within existing infrastructure, integrate it with specialized data, and avoid per-inference costs.

The model lives inside enterprises’ walls, and the cost of running it is theirs to control.

Reka AI does this by embedding its models into Snowflake’s Cortex platform, distributing them through Carahsoft to public-sector buyers, and powering enterprise tools such as Turing Video’s security platform.

In March 2025, it launched Nexus, a system for building internal AI workers that handle workflows including sales and recruitment, reinforcing its broader proposition that organizations should deploy AI capabilities within their own infrastructure rather than depend on metered external access.

And Reka AI is not the only company taking this approach. Cohere also enables enterprises to run models in private environments and frames its philosophy around bringing AI to the data rather than sending data outward. Mistral AI has gone further by releasing models under open licenses that allow companies to own, modify, and operate them independently. What links these companies is not architecture or benchmark scores but a shared view of the enterprise relationship, in which AI becomes an embedded internal asset rather than a permanently rented external service.

When ownership gets cheaper than renting

What makes this approach appealing to enterprise clients is not just their control over usage, but also the economics, which suggest that self-hosted AI is more compelling than conventional wisdom would have it, at least when done at scale.

A 2026 total cost of ownership analysis commissioned by Lenovo found that running AI models on a company’s own hardware can be dramatically cheaper than relying on the cloud. Self-hosting delivered roughly 8 times lower cost per million tokens than cloud infrastructure and up to 18 times lower cost than frontier API services. In high-usage environments, the upfront hardware investment paid for itself in less than four months. Over a typical five-year server lifecycle, savings could exceed $5M per machine. The takeaway was blunt: cloud-first is no longer the default answer for every AI workload.
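To see how a payback period like that falls out of the arithmetic, here is a minimal sketch. Only the 18x cost ratio comes from the analysis cited above; the hardware price, monthly token volume, and API price are hypothetical placeholders, and the sketch assumes the self-hosted per-token cost covers running costs only, with hardware paid upfront. It is not the methodology of the Lenovo study.

```python
def payback_months(
    hardware_cost: float,
    monthly_million_tokens: float,
    api_cost_per_million: float,
    cost_ratio: float,
) -> float:
    """Months until upfront hardware is repaid by per-token savings.

    cost_ratio is how many times cheaper self-hosting is per million tokens.
    Returns infinity when self-hosting saves nothing per token.
    """
    self_hosted_cost_per_million = api_cost_per_million / cost_ratio
    saving_per_million = api_cost_per_million - self_hosted_cost_per_million
    monthly_saving = monthly_million_tokens * saving_per_million
    if monthly_saving <= 0:
        return float("inf")
    return hardware_cost / monthly_saving


# Hypothetical inputs for illustration only
months = payback_months(
    hardware_cost=250_000,          # assumed server price in dollars
    monthly_million_tokens=10_000,  # assumed volume: 10 billion tokens a month
    api_cost_per_million=10.0,      # assumed API price in dollars
    cost_ratio=18,                  # "up to 18 times lower" from the text
)
print(f"payback in about {months:.1f} months")
```

The result is very sensitive to volume: cut the monthly tokens by a factor of ten and the payback period stretches by the same factor, which is why the headline numbers apply to high-usage environments.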

However, for a significant segment of the enterprise market, the calculation is not primarily about cost; it is about control.

Banks, hospitals, defense firms, and government agencies operate under strict rules about where data can go and who can see it. Sending sensitive records to an external API, even a secure one, creates compliance burdens and risk. Running models on-premises or in isolated, private environments removes that exposure at the architectural level, because the data never leaves the organization.

This approach is reflected in how AI is being adopted through companies like Reka AI, which works with Carahsoft to reach U.S. government buyers through established procurement channels.

Similarly, Cohere has partnered with the Royal Bank of Canada to run AI systems within the bank’s own infrastructure, and Mistral AI is collaborating with Singapore’s Home Team Science and Technology Agency and with Stellantis on deployments that operate without constant connectivity. These are embedded systems, not pay-per-call APIs.

Taken together, the cost advantages and regulatory realities suggest a familiar split emerging in AI. Some workloads make sense to rent, while others make more sense to own. Just as cloud computing led to hybrid infrastructure rather than a total migration, AI may follow the same path, with organizations choosing, case by case, where control and economics matter most.

However, despite the economic and data-sovereignty angles, companies like Reka AI face an uphill battle that will ultimately determine their long-term impact on the industry.

The obstacles ahead

Of these, the most important factor is performance. The case for smaller, self-hosted models only works if they are good enough for serious business use. Reka AI, Cohere, and Mistral AI all say they can handle demanding enterprise tasks; the risk is not that larger players will match them, but that the frontier will leap forward again. If the next model from OpenAI or Google creates a meaningful capability gap, what is good enough now may quickly fall short for high-value tasks.

Then there is the question of operational burden. Running models in-house requires infrastructure, upgrades, security patching, and skilled teams. Those personnel costs can reach hundreds of thousands of dollars a year, which weakens the simple argument that self-hosting is always cheaper. APIs push that responsibility to the vendor.
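One way to make that trade-off concrete is to ask how much monthly volume is needed before per-token savings cover the fixed cost of running models in-house. The sketch below is illustrative only: the staffing figure stands in for the "hundreds of thousands of dollars a year" mentioned above, and both per-token prices are hypothetical.

```python
def break_even_volume(
    annual_fixed_cost: float,
    api_cost_per_million: float,
    self_hosted_cost_per_million: float,
) -> float:
    """Monthly volume (in millions of tokens) at which self-hosting breaks even.

    annual_fixed_cost covers overhead that does not scale with usage,
    such as the team that operates the models.
    """
    saving_per_million = api_cost_per_million - self_hosted_cost_per_million
    if saving_per_million <= 0:
        raise ValueError("self-hosting must be cheaper per token to ever break even")
    monthly_fixed_cost = annual_fixed_cost / 12
    return monthly_fixed_cost / saving_per_million


# Hypothetical inputs for illustration only
volume = break_even_volume(
    annual_fixed_cost=300_000,         # assumed yearly staffing cost in dollars
    api_cost_per_million=10.0,         # assumed API price in dollars
    self_hosted_cost_per_million=1.0,  # assumed in-house running cost in dollars
)
print(f"break-even at about {volume:,.0f} million tokens per month")
```

Below that volume, the API is the cheaper option even though each individual token costs more, which is the point the operational-burden argument is making.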

Finally, there is the question of ownership. While some companies operating in the smaller-model space have made their model weights public, others have kept them proprietary. This brings them closer to the business model of a traditional enterprise license than outright ownership, and the long-term implications depend on commercial terms that are not public.

Fit over frontier

Despite the challenges, companies like Reka AI, Cohere, and Mistral stand out not by claiming to be the smartest model in the room, but by being the ones that fit. They argue that AI should adapt to an organization’s infrastructure, data, and constraints, not the other way around. As AI shifts from experiment to core operations, that distinction will matter more.

The struggle between farmers and Deere & Company is both a cautionary tale about how smaller operations suffer when they fail to understand the limits of their ownership rights and a lesson for enterprises scrambling to deploy AI workflows. That makes companies like Reka AI all the more important: they offer an alternative to a business model that leaves enterprises asking whether owning nothing actually makes them happier.

Brain Snack (for Builders)

| 💡 Design every high-volume workflow so you can swap frontier APIs for self-hosted models later. |
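In practice, that usually means the workflow talks to a small interface rather than to a vendor SDK directly. Here is a minimal sketch of the idea. Every name in it (`TextModel`, `FrontierAPIBackend`, `SelfHostedBackend`, `summarize_ticket`, the model name, and the endpoint URL) is hypothetical, and both backends return placeholder strings instead of making real network calls.

```python
from dataclasses import dataclass
from typing import Protocol


class TextModel(Protocol):
    """The only thing a workflow is allowed to know about a model."""

    def complete(self, prompt: str) -> str: ...


@dataclass
class FrontierAPIBackend:
    model_name: str  # hypothetical identifier for a hosted model

    def complete(self, prompt: str) -> str:
        # Placeholder: a real version would call the vendor's SDK here.
        return f"[{self.model_name} via API] {prompt[:40]}"


@dataclass
class SelfHostedBackend:
    endpoint: str  # hypothetical internal URL for an in-house model server

    def complete(self, prompt: str) -> str:
        # Placeholder: a real version would call the internal server here.
        return f"[self-hosted at {self.endpoint}] {prompt[:40]}"


def summarize_ticket(model: TextModel, ticket_text: str) -> str:
    """A high-volume workflow that depends only on the TextModel interface."""
    prompt = f"Summarize this support ticket: {ticket_text}"
    return model.complete(prompt)


# Swapping backends is a one-line change at the call site.
rented = summarize_ticket(FrontierAPIBackend("frontier-model"), "Printer is on fire")
owned = summarize_ticket(SelfHostedBackend("http://models.internal"), "Printer is on fire")
print(rented)
print(owned)
```

The design choice is to keep prompts and business logic on the workflow side of the interface, so a later move from rented to owned models touches configuration rather than every caller.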

Outperform the competition.

Business is hard. And sometimes you don’t really have the tools you need to be great at your job. Well, Open Source CEO is here to change that.

Tools & resources, ranging from playbooks, databases, courses, and more.

Deep dives on famous visionary leaders.

Interviews with entrepreneurs and playbook breakdowns.

Are you ready to see what it’s all about?

*This is sponsored content

Quick Bits, No Fluff

OpenAI exec walks away: Caitlin Kalinowski leaves OpenAI over the Pentagon deal, protesting the shift from ethics talk to battlefield AI.

Russian spies target chats: Dutch intelligence says Kremlin hackers hijack Signal and WhatsApp accounts using fake support messages, QR tricks, and linked devices.

Apple Home Display delayed: Rumors say Apple’s AI smart home screen slips to a fall launch, tied to a bigger Siri upgrade in iOS 27.

Wednesday Poll

🗳️ For your org, what feels like the right long-term AI model strategy?

The Toolkit

AssemblyAI: API-first platform for speech recognition and audio intelligence. Try it to auto-transcribe calls and pull out topics, sentiment, and speakers from long-form audio, so your team is not stuck taking notes.

Chroma: Open source vector database built for AI apps. Use it to store and query embeddings so your agents or RAG systems can actually remember context instead of re-reading everything on every call.

Continue: Free, open source AI copilot that runs inside VS Code and JetBrains. Use it to get inline code suggestions, refactoring, and local RAG in your own repos without handing everything off to a cloud IDE.
