- Roko's Basilisk

The Startup Hiding AI Infrastructure

Plus: Desert alien delusions, Play Store fee cuts, and human-first AI.

Here’s what’s on our plate today:

🤖 How Modal turns messy AI infrastructure into serverless seconds.

📰 Headlines: Meta glasses spiral, Play fees cut, pro-human pledge.

🧰 Toolkit: Mirage, Speechmatics, Superhuman for visuals, speech, and email.

🧪 Weekend lab: test Speechmatics and Superhuman in focused sprints.

Let’s dive in. No floaties needed…

🌱 Framer for Startups

First impressions matter. Launch a stunning, production-ready site in hours with Framer, no dev team required.

Early-stage startups get one year of Framer Pro free, a $360 value.

No code, no delays. Scale from MVP to full product with CMS, analytics, and AI localization.

*This is sponsored content

The Laboratory

Inside Modal AI’s bet on making cloud infrastructure disappear

TL;DR

Modal lets developers run AI workloads in the cloud without touching servers, containers, or raw GPU setups.

Modal built its own Rust-based stack so workloads can start on GPUs in under a second and scale to thousands of machines.

Customers pay only for compute time used, turning AI infra from ‘reserve GPUs and pray’ into true usage-based tooling.

The tradeoff: massive convenience and speed in exchange for deeper dependence on a single abstraction layer for critical AI workloads.

Running a business is often a negotiation between costs and revenue. Owners work to keep costs down while constantly looking for ways to grow profits by fine-tuning processes, optimizing workflows, and forecasting future expenditures. In short, running a successful business is like walking a tightrope across a deep gorge.

In this delicate balance, technology has historically served as the balancing pole. Used wisely, it makes the crossing easier; misused, it can lead to catastrophic failure. In this context, artificial intelligence is marketed as a surefire way for businesses to cut costs and maximize profits. However, this oversimplifies the complex process of adopting a new technology.

To understand how AI fits into a business model, it helps to think of it as a vehicle. While a vehicle can help a business reduce transportation costs, its true cost cannot be judged by the sticker price alone. The purchase is not a one-time expense but the beginning of a long financial commitment.

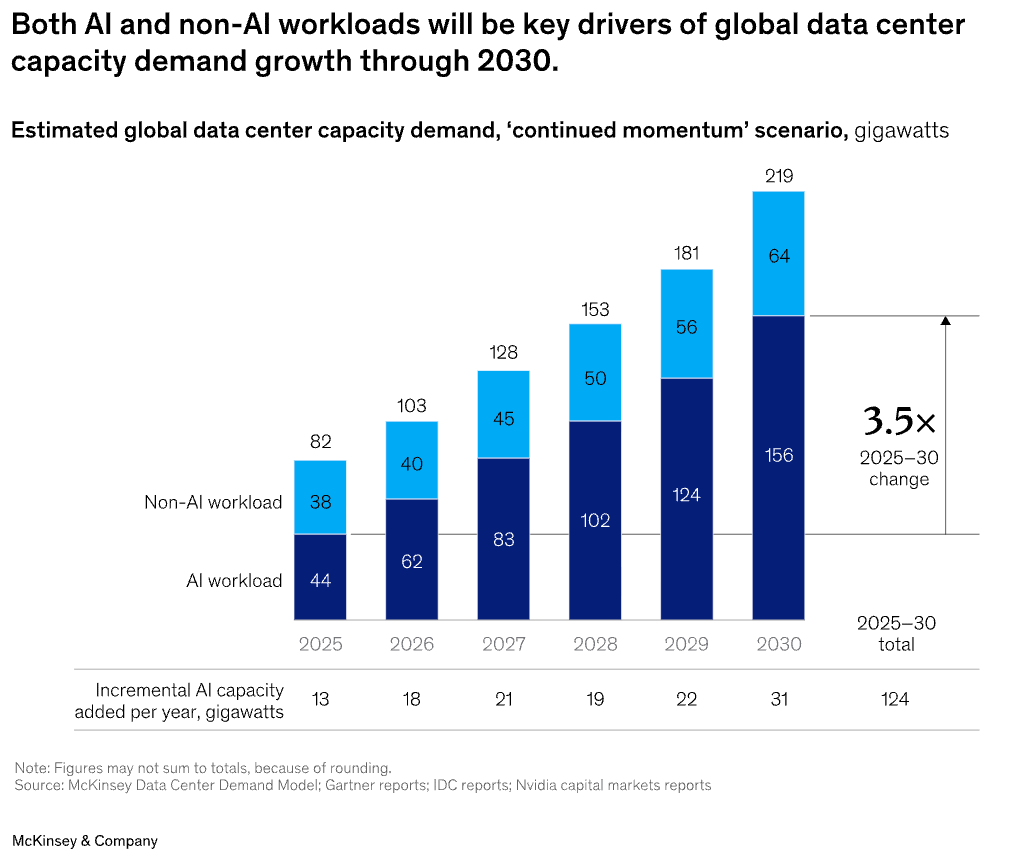

Similarly, when businesses consider using AI, they must factor in the cost of infrastructure, including GPU configurations, orchestration layers, monitoring systems, failure handling, and the ongoing engineering effort required to keep everything running reliably at scale.

Often, this is easier said than done, and it is in this space that platforms like Modal become consequential.

What Modal actually does

Modal Labs is a New York-based AI infrastructure startup that has built a serverless platform allowing developers to run AI workloads in the cloud without managing servers, containers, or GPU allocations. Instead of wrestling with configuration files, Kubernetes clusters, and hardware provisioning, developers write a few lines of Python code, and Modal handles the rest: spinning up GPU-powered containers in under a second, scaling to thousands of machines on demand, and scaling back to zero when the work is done. Users pay only for the compute time they actually consume.
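The "scale up on demand, scale back to zero" behavior can be illustrated with a toy autoscaling rule. This is a minimal sketch under stated assumptions, not Modal's scheduler: the function name `target_containers`, the per-container concurrency parameter, and the container cap are all hypothetical. It only shows the core idea that running capacity tracks queued work and drops to zero when there is none, which is what makes "pay only for what you use" possible.

```python
import math


def target_containers(queued_requests: int,
                      per_container_concurrency: int,
                      max_containers: int) -> int:
    """Hypothetical scale-to-zero rule: cover the queue, never exceed the cap."""
    if per_container_concurrency <= 0:
        raise ValueError("per_container_concurrency must be positive")
    if queued_requests <= 0:
        return 0  # no work: scale to zero, so nothing is running or billed
    # Enough containers to serve every queued request at the assumed concurrency.
    needed = math.ceil(queued_requests / per_container_concurrency)
    return min(needed, max_containers)  # capacity is finite, so cap the fleet


if __name__ == "__main__":
    for queue in (0, 1, 40, 250_000):
        print(queue, "->", target_containers(queue, per_container_concurrency=4, max_containers=5_000))
```

A production autoscaler would also smooth out spikes and keep some containers warm to avoid cold starts; the sketch leaves that out to keep the scale-to-zero logic visible.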

Founded in 2021 by CEO Erik Bernhardsson, who previously built the music recommendation engine at Spotify and served as CTO of Better.com, and CTO Akshat Bubna, Modal has grown rapidly in a market defined by surging demand for AI compute and chronic GPU underutilization. The company reached unicorn status in September 2025 after raising $87M in a Series B led by Lux Capital at a $1.1B valuation. As of February 2026, TechCrunch reported that Modal was in talks to raise new funding at a $2.5B valuation, with General Catalyst expected to lead the round.

Modal's growth can be understood by examining how it bridges the gap between companies investing enormous sums in AI infrastructure and developers who still struggle with its complexity. At its core, the idea is simple: cloud computing should feel less like managing servers and more like using electricity, an invisible resource that is simply available when needed.

Built from scratch, on purpose

The easiest way to understand Modal's advantage is to view its architecture as a moat. Unlike competitors that build on top of Docker and Kubernetes, Modal built its entire stack from scratch in Rust: a custom container runtime, a scheduler, and a lazy-loading filesystem (built with FUSE) that fetches only the files a container needs, when it needs them.

Modal’s storage system is designed to avoid the inefficiency of moving entire container images back and forth. Instead of transferring large files every time, it loads only what is necessary, allowing containers to start in under a second, a step that can take much longer on many traditional platforms.
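To make the lazy-loading idea concrete, here is a minimal in-memory sketch. It is a hypothetical illustration, not Modal's Rust/FUSE implementation: the `LazyImage` class, the manifest of path-to-hash entries, and the `fetch_blob` callback are assumptions. The point is that a container can "start" holding only a small manifest, and file bytes cross the network only on first read.

```python
from typing import Callable, Dict


class LazyImage:
    """Hypothetical lazy-loading view of a container image (not Modal's code).

    The image is described by a small manifest (path -> content hash). File bytes
    are fetched from a remote blob store only when a path is first read, then cached.
    """

    def __init__(self, manifest: Dict[str, str], fetch_blob: Callable[[str], bytes]):
        self.manifest = manifest            # cheap to ship: only paths and hashes
        self.fetch_blob = fetch_blob        # stands in for a network call
        self.cache: Dict[str, bytes] = {}   # content hash -> bytes already fetched
        self.bytes_fetched = 0              # how much data actually moved

    def read(self, path: str) -> bytes:
        digest = self.manifest[path]        # KeyError if the file is not in the image
        if digest not in self.cache:        # first access: fetch on demand
            data = self.fetch_blob(digest)
            self.cache[digest] = data
            self.bytes_fetched += len(data)
        return self.cache[digest]           # later reads are served locally


if __name__ == "__main__":
    # Simulated remote store: a large model file and a small entrypoint script.
    store = {"h-weights": b"\x00" * 1_000_000, "h-main": b"print('hi')"}
    image = LazyImage({"/model/weights.bin": "h-weights", "/app/main.py": "h-main"},
                      store.__getitem__)
    image.read("/app/main.py")
    image.read("/app/main.py")   # second read hits the cache
    print(image.bytes_fetched)   # only the small script was transferred
```

Keying the cache by content hash rather than by path also means identical files shared across images are fetched once, a common design choice in content-addressed storage.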

For developers, the workflow is intentionally simple. They annotate a Python function, select the required GPU, and deploy, eliminating the need to manage Dockerfiles, YAML configurations, or Kubernetes manifests.
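In Modal's own SDK, this annotation takes the form of a decorator such as `@app.function(gpu="H100")` on an ordinary Python function. The snippet below does not use Modal; it is a self-contained imitation of that pattern, and the `Registry` class, its `function` method, and the `timeout_s` parameter are hypothetical. It shows how a decorator can attach hardware requirements directly to code, so a platform, rather than a Dockerfile or YAML manifest, decides where the function runs.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Optional


@dataclass
class Spec:
    fn: Callable
    gpu: Optional[str]   # e.g. "H100"; None means CPU-only
    timeout_s: int


class Registry:
    """Hypothetical stand-in for a serverless app object: it only records requirements."""

    def __init__(self, name: str):
        self.name = name
        self.functions: Dict[str, Spec] = {}

    def function(self, gpu: Optional[str] = None, timeout_s: int = 300):
        def decorator(fn: Callable) -> Callable:
            # Store the hardware request next to the code; a real platform would
            # use it to pick a machine, build an image, and run fn remotely.
            self.functions[fn.__name__] = Spec(fn, gpu, timeout_s)
            return fn
        return decorator


app = Registry("demo")


@app.function(gpu="H100", timeout_s=600)
def embed(text: str) -> int:
    return len(text.split())  # placeholder for real GPU work


print(app.functions["embed"].gpu, embed("serverless GPUs on demand"))
```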

Behind the scenes, Modal runs on multiple cloud providers, including Oracle Cloud Infrastructure and AWS. This saves developers the hassle of choosing a provider, as the platform automatically draws from a global pool of GPUs, managing capacity and scaling so workloads can expand to thousands of GPUs or scale back down when demand falls.
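Drawing from a multi-provider GPU pool can be pictured as a placement problem. The sketch below is a hypothetical greedy allocator, not Modal's scheduler: provider names, free-capacity counts, and hourly prices are invented for illustration. It fills a request from the cheapest providers first and fails loudly if the combined pool runs out.

```python
from typing import Dict, List, Tuple


def allocate(requested: int, pools: List[Tuple[str, int, float]]) -> Dict[str, int]:
    """Greedily place `requested` GPUs on the cheapest providers with free capacity.

    pools: (provider, free_gpus, price_per_gpu_hour). All values are hypothetical.
    Raises RuntimeError if the combined pool cannot satisfy the request.
    """
    plan: Dict[str, int] = {}
    remaining = requested
    for provider, free, _price in sorted(pools, key=lambda p: p[2]):  # cheapest first
        if remaining == 0:
            break
        take = min(free, remaining)
        if take > 0:
            plan[provider] = take
            remaining -= take
    if remaining > 0:
        raise RuntimeError(f"only {requested - remaining} of {requested} GPUs available")
    return plan


if __name__ == "__main__":
    pools = [("cloud-a", 300, 3.10), ("cloud-b", 800, 2.90), ("cloud-c", 500, 3.40)]
    print(allocate(1000, pools))
```

Price is only one axis; a real placement decision would also weigh region, latency, and data-residency constraints, which is exactly the visibility question raised later in this piece.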

Why paying by the second changes the math

Modal’s importance stems not just from how it reduces developers’ burden, but also from how it helps businesses without hyperscaler-level resources manage the cost of AI deployment.

Modal uses a pay-as-you-go pricing model, charging only for the compute time customers actually use. Usage is billed by the second, and rates vary by hardware: lower-power GPUs cost less, while high-end chips like the H100 cost more. Crucially, customers pay nothing while their workloads are idle, which removes the need for startups and mid-size companies to commit to costly long-term GPU rentals.
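A quick back-of-the-envelope comparison shows why idle time dominates the math. The rates below are hypothetical placeholders, not Modal's published prices: assume an on-demand GPU billed per second at $4.00/hour versus a reserved GPU at a discounted $2.50/hour that is paid for around the clock. If the reserved rate is $r$ and the on-demand rate is $o$, the two cost the same when the GPU is busy a fraction $u = r / o$ of the time.

```python
def per_second_cost(busy_seconds: float, rate_per_hour: float) -> float:
    """Pay only while code runs, billed per second (hypothetical rate)."""
    return busy_seconds * rate_per_hour / 3600


def reserved_cost(hours_reserved: float, rate_per_hour: float) -> float:
    """Pay for every reserved hour, busy or idle (hypothetical rate)."""
    return hours_reserved * rate_per_hour


def break_even_utilization(reserved_rate: float, on_demand_rate: float) -> float:
    """Busy fraction at which reserved and per-second billing cost the same."""
    return reserved_rate / on_demand_rate


if __name__ == "__main__":
    # A bursty workload: busy 2 hours a day for 30 days.
    busy = 2 * 3600 * 30
    print(per_second_cost(busy, 4.00))        # on-demand, billed per second
    print(reserved_cost(24 * 30, 2.50))       # reserved around the clock
    print(break_even_utilization(2.50, 4.00)) # busy fraction where they tie
```

Under these assumed rates, the bursty workload costs $240 a month on per-second billing versus $1,800 reserved, and per-second billing stays cheaper until the GPU is busy more than 62.5% of the time.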

The practical benefits of this approach are significant. Suno, an AI music company, said that using Modal saved it months of development work because it did not need to build a dedicated infrastructure team.

Beyond startups, Modal also plays an important role in Scale AI’s operations, providing compute capacity during sudden surges in demand.

For businesses, the appeal is largely about time and focus. Engineers can move from writing code to running it in a live environment very quickly, rather than spending weeks setting up and maintaining complex systems. In a field where technical teams often lose many hours each week to infrastructure tasks, reducing that overhead can mean faster product development and a clearer competitive edge.

However, Modal is not alone in this pursuit. Companies like Baseten, Fireworks AI, and Replicate are chasing the same opportunity in serverless AI inference. At the same time, neoclouds such as CoreWeave and Lambda Labs compete at the raw-GPU-rental layer below.

What distinguishes Modal is that it operates between these two tiers: abstracting GPU capacity from multiple cloud providers while wrapping it in a developer experience that its competitors have struggled to match.

Yet, as with the rest of the AI landscape, Modal’s scope and impact are shifting rapidly as ethical and policy outlooks evolve.

The risks hiding behind the abstraction

As more AI businesses rely on serverless compute platforms, dependency risk grows. A platform-level outage can disrupt many applications at once, while the abstraction that simplifies development can also obscure where data is processed and stored. This lack of visibility can complicate data sovereignty, compliance, and vendor risk assessments, particularly for organizations in tightly regulated sectors.

Then there is the environmental paradox. By turning GPUs on only when needed, platforms like Modal can improve hardware utilization and potentially reduce waste compared to always-on infrastructure. Yet the same ease of access may drive higher overall compute usage, as lower friction often encourages greater consumption.

These concerns intersect with a rapidly shifting policy landscape. Privacy and data residency regulations, along with emerging debates over compute sovereignty, sit uneasily with the borderless execution of cloud services. If workloads can run anywhere in the world without users knowing where they are, companies in industries such as healthcare, finance, or defense may face legal and operational uncertainty. At the same time, as governments increasingly view AI compute capacity as strategic infrastructure, platforms that mediate access to that capacity may attract closer regulatory scrutiny.

Seen from a distance, Modal’s rise reflects two important developments in the AI landscape: companies’ willingness to adopt AI in their workflows while keeping build and maintenance costs in check, and the need to simplify access to complex AI infrastructure.

While hyperscalers can afford to build and maintain their own AI infrastructure, platforms like Modal are becoming the safety net that helps small and medium-sized businesses walk the tightrope between profit and loss, a balance that rapidly evolving technologies like AI have made more precarious.

For years, working in the cloud meant managing layers of infrastructure, moving from physical servers to virtual machines, then to containers, and finally to orchestration systems. Each wave made things easier, yet also introduced new forms of complexity. Modal pushes this progression further by aiming to hide the machinery entirely. Developers specify the task they want performed, while the platform decides how and where it runs.

The quiet leverage of infrastructure

While AI is often described as the engine of future business growth, platforms like Modal are quietly shaping how that engine is actually built, operated, and scaled. In doing so, they may prove just as consequential as the models that command the spotlight.

Friday Poll

🗳️ How are you handling AI infrastructure in your own stack right now?

Outperform the competition.

Business is hard, and sometimes you don’t have the tools you need to excel at your job. Open Source CEO is here to change that.

Tools & resources, ranging from playbooks, databases, courses, and more.

Deep dives on famous visionary leaders.

Interviews with entrepreneurs and playbook breakdowns.

Are you ready to see what it’s all about?

*This is sponsored content

Headlines You Actually Need

Meta glasses meltdown: Futurism reports a Meta AI smart glasses user spiraled into delusions and desert alien hunts, spotlighting ‘AI psychosis’ and weak safety guardrails.

Google cuts Play fees: Google settles with Epic and drops standard global Play Store commissions to 20%, reshaping margins and power dynamics for mobile app developers.

Pro-human AI manifesto: Leading researchers and groups back a ‘Pro-Human AI Declaration’ urging AI firms to keep humans in charge and reject automation that sidelines human agency.

Weekend To-Do

The Toolkit

Mirage: Browser-based 3D design app that lets you prototype interactive product shots and visuals directly in your editor.

Speechmatics: Speech recognition engine for real-time, multilingual transcription and voice analytics across audio and video.

Superhuman: Rebranded Grammarly platform offering an AI assistant that drafts, edits, and manages communication across email and work apps.

Rate This Edition

What did you think of today's email?