- Roko's Basilisk

- Posts

- The Hidden AI Tax

The Hidden AI Tax

Plus: Parag's AI return, OpenAI revenue miss, and UK leans on US tech.

Here’s what’s on our plate today:

🧪 Why tokens are becoming the hidden cost of AI.

📰 Parag's $2B comeback, OpenAI's IPO wobble, and the UK's AI dependence.

🛠️ Three tools worth trying: Tiktokenizer, LiteLLM, LLMLingua.

🗳️ Poll: where to cut as tokens eat AI budgets?

Let’s dive in. No floaties needed…

How Jennifer Aniston’s LolaVie brand grew sales 40% with CTV ads

The DTC beauty category is crowded. To break through, Jennifer Aniston’s brand LolaVie, worked with Roku Ads Manager to easily set up, test, and optimize CTV ad creatives. The campaign helped drive a big lift in sales and customer growth, helping LolaVie break through in the crowded beauty category.

*This is sponsored content

The Laboratory

TL;DR

Inference is the real cost center: Training is a one-time expense, but inference is ongoing. Deloitte reports that inference now accounts for two-thirds of all AI compute, and a $200-a-month project during development can balloon to $10k a month once it reaches production scale.

Reasoning models multiply the bill quietly: Chain-of-thought models generate thousands of internal tokens that never appear in output but are still billed, inflating costs two to five times and consuming up to 80% of token budgets in some configurations.

Non-English languages pay a structural tax: Tokenizers optimized for English fragment languages like Arabic and Hindi incur processing costs far higher than English, with some scripts running over 12 times as expensive.

The real moat is operational: Organizations cutting costs 40–60% are doing it through prompt engineering, context compression, and model routing, not by chasing bigger models.

Why tokens are becoming the hidden cost of AI

Since the mid-20th century, the global economy has been shifting steadily toward knowledge as its primary unit of value, a transition that shows up in everything from consulting fees to software licensing to the way enterprises now budget for artificial intelligence. This shift has made the anecdote about the mechanic who charged $10k to fix a dead engine, $1 for the tap, and $9,999 for knowing where to tap a staple of business presentations for decades.

However, while this parable continues to resonate with its emphasis on knowledge as a generator of value, in 2026, it has acquired a new dimension. Today, the ‘tap’ is increasingly being delivered by an AI model, and the bill arrives not in labor hours but in tokens.

For enterprises adopting AI workflows, the lack of clarity about how this knowledge is priced extends beyond delayed projects. It inflates operational costs, which only become visible as production scales, and has the potential to derail operational budgets and hurt profit margins. The biggest culprit here is a lack of understanding of the real cost of running AI models and the shifting pricing strategies introduced by AI labs looking to generate returns for their investors.

To understand how AI pricing actually works, one needs to take a closer look at how AI breaks down information into manageable pieces and what it costs to deliver those pieces as AI output.

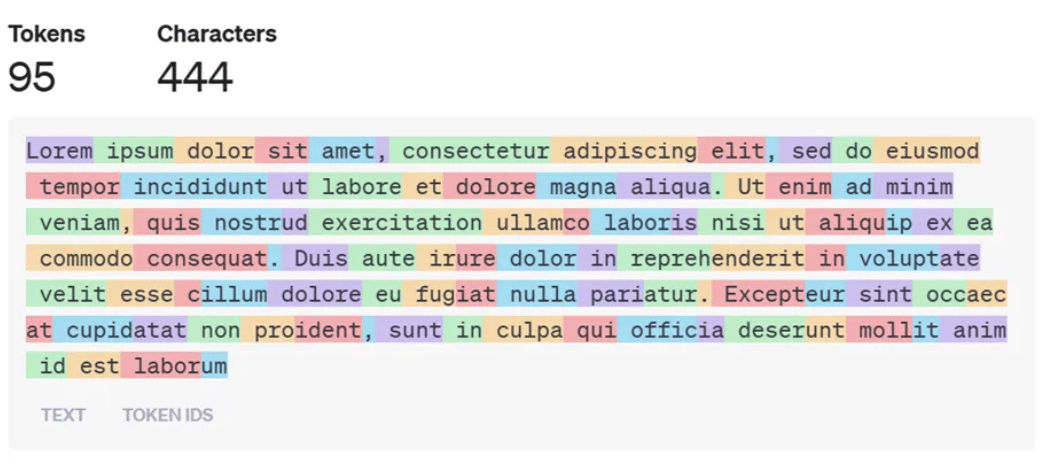

When a person reads the sentence “I am transforming business,” the meaning is immediate and intuitive. However, a large language model cannot directly understand words the way humans do, so the first step is to convert language into numbers via a process called tokenization. The sentence is broken into smaller units called tokens, which may be whole words, word fragments, or punctuation marks, and each token is assigned a numerical ID from the model’s vocabulary.

Instead of reading language as meaning, the model processes these numerical representations and learns patterns in how they appear together across vast amounts of text. Most modern systems rely on subword tokenization, which means words are often split into reusable parts, such as “transform” and “ing,” rather than stored as complete units. This approach allows models to handle unfamiliar words by recognizing known fragments and estimating meaning from context. In effect, humans see language as ideas expressed through words, while AI systems see it as patterns expressed through numbers.

Methods such as Byte Pair Encoding and WordPiece are popular because they keep the model’s vocabulary small enough to manage while still allowing it to understand many different words. What looks like a small technical step, however, has major economic consequences, something many enterprise AI strategies still fail to recognize.

Why cheap pilots become expensive products

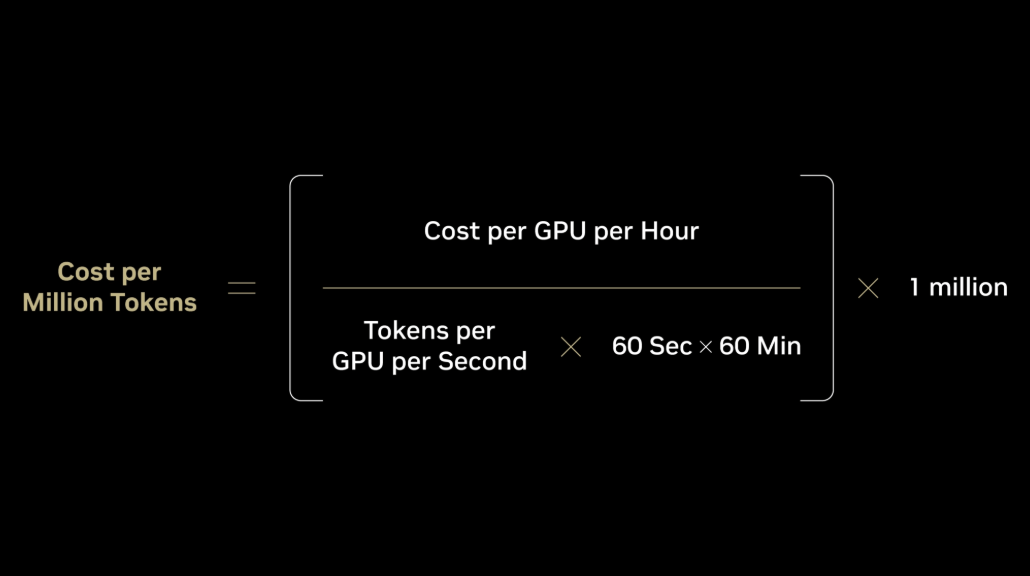

As enterprises move through 2026, they are encountering what some analysts have begun calling the “Inference Wall.” It stems from the knowledge that while training a model is a one-time capital expenditure, inference, the act of a model processing tokens in response to a user, is a continuous operational expense.

And as enterprises continue to invest in automation, Deloitte reports that inference workloads now account for roughly two-thirds of all AI compute, up from a third in 2023 and half in 2025. Additional industry data suggests these workloads consume over 55% of AI-optimized infrastructure spending, with projections pointing toward 70–80% by year’s end.

Many companies discovered the problem only after launch: the cheap pilot that looked brilliant in a board meeting became a five-figure monthly liability in production.

A recent analysis of chain-of-thought prompting economics illustrated a pattern familiar to many enterprises: a project that costs $200 a month during development can balloon to $10k a month once it reaches production scale. The difference is not just user volume; it is token bloat, where every query, every system prompt, and every internal reasoning step adds to the bill.

At scale, the gap between an efficient and an inefficient tokenization strategy translates directly to the bottom line. Research from Predli, which modeled a multilingual support assistant handling roughly 864k requests per month, found that the difference could represent a nearly $300k monthly saving for large-scale deployments. That figure makes tokenization less of an academic concern and more of a line item for quarterly reviews.

To make matters more complicated, the arrival of reasoning models has further altered the token landscape. Traditional models generated tokens roughly linearly: one token of internal processing produced approximately one token of visible output.

However, the latest generation of models performs what researchers call internal reasoning (chain-of-thought processing) before a single word reaches the user. This internal monologue can generate thousands of tokens that never appear in the user interface but are still billed to the enterprise.

According to OpenAI’s own documentation, reasoning tokens occupy space in the model’s context window and are billed as output tokens, even though they remain invisible to the end user.

Research on chain-of-thought prompting has shown that it inflates token costs by two to five times compared to direct responses and adds significant latency. For businesses, this means that even if a vendor drops its price per-million tokens by 90%, the actual cost of a query may still rise if the model’s thinking effort is not managed.

Decisions that were once simple, like whether to use a high or low reasoning-effort setting, now carry direct profit-and-loss implications. In some configurations, internal reasoning alone can consume up to 80% of an allocated token budget.

The language tax of global AI

The cost problem deepens when enterprises operate across languages because most tokenizers were optimized for English and are highly efficient at encoding English text, but struggle with the morphological complexity of languages such as Arabic, Hindi, and Japanese.

A peer-reviewed study published at EMNLP examining tokenization bias across 16 African languages confirmed that higher token fertility (the number of tokens a model needs per word) reliably predicts lower accuracy and higher costs.

The economic implications are stark: doubling fertility results in roughly four times the training cost and inference latency. A separate analysis by Predli found that identical semantic content in Arabic can cost over 340% more to process than in English because the tokenizer fragments Arabic words far more aggressively.

The findings were further strengthened by a widely cited research paper on tokenizer fairness across 22 languages, which found that, for some scripts, processing costs are more than 12 times higher than in English.

This structural inefficiency forces companies operating in non-English markets into an uncomfortable choice: pay more for the same intelligence, or work within a narrower context window that degrades accuracy. As one researcher put it, tokenization is the tax that low-resource languages cannot afford to pay, and speakers are charged for every token at every layer for every variant they write.

For enterprises expanding their AI footprints globally, this can become a structural barrier to equitable and cost-effective digital transformation, making language-balanced tokenizers a core procurement requirement rather than a nice-to-have.

Why smart companies are optimizing inputs, not just models

To address these rising, often unexpected costs, forward-thinking organizations are moving beyond off-the-shelf tokenization from providers such as OpenAI, Anthropic, and Google. Instead, they are implementing custom preprocessing layers that function as intelligent compression. By replacing recurring enterprise-specific jargon or complex data structures with single placeholders before the text ever reaches the model, companies can reduce total token usage by 30–50% without visible loss in output quality.

The toolkit extends beyond custom tokenizers to include prompt engineering, once dismissed as a trivial exercise in phrasing but now emerging as a legitimate discipline for managing AI costs.

Techniques like context compression, which reduces the number of tokens fed into a prompt without destroying the information needed for an accurate response, and model routing, which directs simple queries to cheaper, smaller models and reserves expensive reasoning models for genuinely complex tasks, can cut costs by 40–60% while maintaining quality.

At the same time, the shift toward multimodal tokenization is beginning to unify how models process the world. By converting audio, images, and video into shared token representations, developers can build agents that reason across data types more efficiently. This evolution suggests a future in which tokenization is not just a text-cleaning step but a sophisticated management layer for what some are already calling Intelligence-as-a-Service.

The real moat is not bigger models

For the modern enterprise, the competitive imperative is shifting from raw model capability to operational efficiency. The organizations winning the AI race in 2026 are not necessarily those with access to the largest models, but those with the cleanest data pipelines and the most disciplined tokenization strategies.

As language continues to act as the primary interface for software, the ability to turn it into math cheaply, accurately, and fairly is becoming the defining operational moat. This means investing in token-aware workflow design: auditing system prompts for unnecessary verbosity, routing queries to appropriately sized models, compressing context before it reaches the inference layer, and selecting tokenizers that do not penalize the languages customers use.

As the global economy continues its shift toward knowledge-intensive work, questions about token economics will only become more pointed. Whether enterprises choose to build internal expertise to answer those questions or seek specialists to streamline their AI workflows may well determine which organizations absorb the coming costs gracefully and which discover them on their invoices.

Quick Bits, No Fluff

• Parag's $2B comeback: Ex-Twitter CEO Parag Agrawal's AI startup is raising new funding at a $2B valuation, marking a quiet but significant return to the spotlight.

• OpenAI's IPO wobble: OpenAI missed key revenue and user targets in its sprint toward a public listing, raising fresh questions about its trillion-dollar narrative.

• UK's AI dependence: A new opinion piece argues the UK's AI strategy has left it dangerously dependent on US technology and exposed to Trump-era policy swings.

Launch fast. Design beautifully. Build your company's website on Framer

Framer helps teams design, build, and launch their marketing sites lightning fast.

With the ability to publish hundreds of CMS pages in a single click, operate at a global scale with seamless localization, and even host unified content across multiple domains, teams have never been able to ship faster.

Trusted by companies like Miro, Bilt, and Perplexity.

*This is sponsored content

Thursday Poll

🗳️ Tokens are eating AI budgets. Where's the smartest place to cut? |

3 Things Worth Trying

• Tiktokenizer: Browser tool that shows exactly how OpenAI, Anthropic, and other models tokenize your prompts, useful for spotting hidden token bloat before it hits production.

• LiteLLM: Open-source proxy that routes prompts across 100+ models with a single API, perfect for cost-aware model routing without rewriting your stack.

• LLMLingua: Microsoft's prompt compression tool that shrinks long prompts by up to 20x while preserving accuracy, built for teams trying to tame chain-of-thought costs.

The Toolkit

• Together AI: Cloud platform for running and fine-tuning open-source AI models at scale.

• VEED: Browser-based video editor with AI subtitles, voice cloning, and one-click translation.

• Writer: Enterprise AI writing platform with custom models trained on your brand voice.

Rate This Edition

What did you think of today's email? |