The AI Chip War Just Split In Two

Plus: Monarch's implosion, Arcee's rise, and Anthropic's most powerful secret.

Here’s what’s on our plate today:

🧪 DeepSeek ditches NVIDIA for Huawei, and what it means for the future of AI.

🗞️ Tractor flop, open source underdog, Anthropic's too-dangerous model.

🧠 Roko's Pro Tip on where to actually focus in the chip war.

🗳️ Monday Poll on whether CUDA's moat is finally cracking.

Let’s dive in. No floaties needed…

The Laboratory

TL;DR

DeepSeek's Huawei pivot is a milestone: V4 will run entirely on Huawei's Ascend chips, with Alibaba, ByteDance, and Tencent placing bulk orders totaling hundreds of thousands of units. First time a frontier lab chose a non-NVIDIA stack.

CUDA's moat faces its first real test: DeepSeek rewrote its stack for Huawei's CANN framework. If V4 performs competitively, it validates a non-CUDA path for frontier AI, something two decades of NVIDIA lock-in made look irrational.

NVIDIA's China revenue is gone, but the real risk is strategic: China dropped from 13% of revenue to near zero, yet FY2026 still hit $215B. The financial hit is absorbable. A maturing alternative ecosystem that erodes switching costs over time is not.

Self-reliance has a manufacturing ceiling: SMIC's 7nm yields run ~20%, advanced packaging comparable to TSMC is out of reach, and HBM is still dominated by SK Hynix and Samsung. A Trump official also told Reuters DeepSeek may have trained V4 on smuggled Blackwell chips.

Partial success still shifts the trajectory: Even if V4 underperforms, ongoing investment in Huawei infrastructure builds momentum that compounds. If it competes, China's case for compute independence becomes very hard to dismiss.

How computing is splitting the future of intelligence

Today, artificial intelligence is widely seen as a technological shift that could change the course of human history. AI models are being used voraciously, and the pitch is simple: machines that can perform complex cognitive tasks will amplify human cognitive abilities, redefining what was thought possible just a few years ago. However, this was not always the case.

The GPU inflection point

For decades, neural networks remained more theory than practice because training them on CPUs was painfully slow and commercially impractical. All this changed in 2012 when researchers used GPUs to train AlexNet, proving that massively parallel computing could unlock deep learning at scale.

Originally built for graphics rendering, GPUs proved well suited to the matrix-heavy workloads of AI, cutting training times from weeks to days or hours and triggering a rapid shift across the industry. Within a few years, AI development reorganized around GPU clusters, and by the time large language models arrived, high-end GPUs had become the foundational infrastructure powering modern artificial intelligence.

Today, the importance of GPUs shows up in two developments: the value that international investors and the AI industry place on NVIDIA, one of the most successful GPU makers, and the attempts by successive U.S. administrations to restrict the sale of high-end GPUs to China so that the West does not fall behind in the AI race.

Compute becomes strategy

Of these two developments, the latter stands out as the flashpoint of the global AI race, where the U.S. and China are competing to control not just AI but also the underlying infrastructure that enables it.

In this context, the results of export restrictions on China have been mixed and, in a sense, may have split AI infrastructure into two distinct ecosystems: one dominated by Western powers and the other rapidly taking shape within China. In the U.S., companies like OpenAI, Anthropic, Google, and Microsoft control the LLM narrative, and NVIDIA dominates the chip side of the business. In China, AI labs like DeepSeek are making waves, and the country is moving quickly to ensure its infrastructure no longer depends on foreign suppliers controlled by competing governments.

DeepSeek’s efficiency play

To understand how these distinct ecosystems are evolving, one needs to take a closer look at DeepSeek. The Hangzhou-based startup, backed by High-Flyer, shook global markets in early 2025 by releasing AI models that rival top American systems despite limited access to advanced chips. Its rapid adoption, with tens of millions of downloads, reflects not just performance but a different philosophy focused on maximizing efficiency through smarter design. By using techniques like memory-saving attention and Mixture-of-Experts architectures, DeepSeek has shown that better software can offset weaker hardware, challenging the assumption that cutting-edge chips alone determine AI leadership.
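For readers who want to see why Mixture-of-Experts saves compute, here is a minimal sketch of top-k expert routing. It is a generic illustration, not DeepSeek's implementation: the toy experts, the router scores, and the function names `route_top_k` and `moe_forward` are all hypothetical. The point is only that each token runs through k of the n experts, so most of the model's parameters sit idle on any given step.

```python
import math

def softmax(scores):
    """Turn raw router scores into probabilities."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def route_top_k(gate_scores, k):
    """Return (expert_index, weight) pairs for the k highest-scoring experts.

    Weights are renormalized so the selected experts' weights sum to 1.
    """
    probs = softmax(gate_scores)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)
    return [(i, probs[i] / norm) for i in top]

def moe_forward(x, experts, gate_scores, k):
    """Weighted sum of the selected experts' outputs; the rest never run."""
    return sum(w * experts[i](x) for i, w in route_top_k(gate_scores, k))

# Hypothetical toy experts: each one just scales its input.
experts = [lambda x, s=s: s * x for s in (1.0, 2.0, 3.0, 4.0)]
gate_scores = [0.1, 2.0, 0.3, 1.5]  # made-up router scores for one token

selected = route_top_k(gate_scores, k=2)
active_fraction = len(selected) / len(experts)  # share of experts that ran
output = moe_forward(1.0, experts, gate_scores, k=2)
```

With two of four experts active, only half of the expert compute is spent per token in this toy setup. Production models use far more experts and learned routers, so the ratio can be much smaller.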

The company, however, did not stop there. On 3 April 2026, The Information reported that DeepSeek’s upcoming V4 model would run entirely on Huawei’s latest Ascend chips. Reuters confirmed the reporting, adding that Chinese tech giants Alibaba, ByteDance, and Tencent had placed bulk orders for Huawei chips totaling hundreds of thousands of units in preparation for V4’s launch.

Additionally, DeepSeek had spent months working with Huawei and Cambricon Technologies to rewrite pieces of the model’s underlying code and conduct testing. A 25 February 2026 report further revealed that DeepSeek broke industry norms by denying NVIDIA and AMD early access to its V4 model. Instead, it gave Chinese chipmakers like Huawei a head start, signaling a shift in how AI firms align with hardware partners.

Challenging NVIDIA’s stack

For the U.S. chipmakers that have dominated the AI space, this could mean stiffer competition and, eventually, the loss of that dominance.

For NVIDIA, the immediate financial exposure is significant but not existential. China contributed 13% of total revenue ($17B at fiscal year 2025 levels), per NVIDIA’s 10-K filing, and that share had effectively dropped to zero by February 2026. The company’s overall business remains enormous: fiscal year 2026 revenue reached $215B, up 65% year over year, driven by insatiable demand from U.S. hyperscalers. Losing China hurts, but it does not threaten NVIDIA’s core business in the near term.

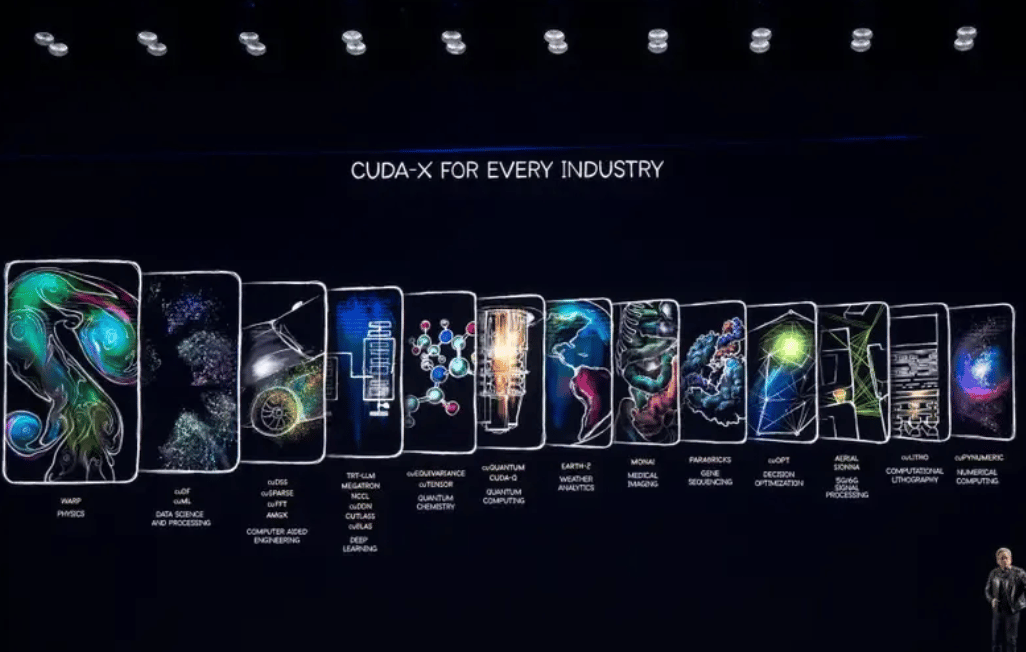
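The revenue figures quoted above can be cross-checked with a few lines of arithmetic. The sketch below uses only the numbers in the article (13%, $17B, $215B, 65%); the variable and function names are illustrative, and the results are rough because the inputs are rounded.

```python
# Figures quoted in the article, in USD billions. No new data is introduced.
china_share = 0.13       # China's share of total revenue
china_revenue = 17.0     # that share in dollars, at FY2025 levels
fy2026_revenue = 215.0   # reported FY2026 revenue
yoy_growth = 0.65        # FY2026 growth over FY2025

def implied_total_from_share(part, share):
    """Total revenue implied by a dollar amount and its percentage share."""
    return part / share

def implied_prior_year(current, growth):
    """Prior-year revenue implied by current revenue and its growth rate."""
    return current / (1.0 + growth)

# Two independent routes to FY2025 revenue; they should roughly agree.
fy2025_from_share = implied_total_from_share(china_revenue, china_share)
fy2025_from_growth = implied_prior_year(fy2026_revenue, yoy_growth)

# How large the lost China business is relative to FY2026 revenue.
china_vs_fy2026 = china_revenue / fy2026_revenue
```

Both routes land near $130B for fiscal year 2025, and the lost China revenue works out to roughly 8% of the fiscal year 2026 total, which is why the hit reads as absorbable.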

Despite that growth, the deeper concern for the U.S.-based chipmaker is strategic. NVIDIA’s dominance rests on two pillars: hardware performance and CUDA, the software ecosystem that locks developers into NVIDIA’s chips. CUDA has been the industry’s default programming platform for nearly two decades, and its library of optimized tools, debugging utilities, and community support creates switching costs that have historically made it irrational for developers to use anything else.

DeepSeek’s decision to rewrite its model stack for CANN (Compute Architecture for Neural Networks), Huawei’s answer to CUDA, is an act of decoupling at the software level. If V4 performs competitively on CANN, it validates a non-CUDA path for frontier AI development for the first time.

Huawei has also been working to lower the migration barrier. It open-sourced CANN in August 2025, and the latest version, CANN Next, introduced CUDA-compatible programming abstractions. But CUDA’s head start is measured in decades, not months, and the gap in third-party library support, debugging tools, and community resources remains wide. For developers outside China, there is currently no compelling reason to switch. The risk for NVIDIA is that this calculus changes over time as CANN matures and a growing Chinese developer base provides the feedback loops needed to improve it.

For other U.S. chipmakers like AMD, the impact is smaller but still meaningful. The company reported $390M in MI308 chip sales in its most recent quarter, per Reuters, and uncertainty around export licenses complicates its outlook for China.

However, there is still time, and not everyone reads DeepSeek’s move as a watershed.

Ben Bajarin, CEO of research firm Creative Strategies, told Reuters that the impact on NVIDIA and AMD is “minimal” because most enterprise customers globally do not run DeepSeek, which serves more as a benchmarking model. He added that new AI coding tools are compressing the optimization timeline, meaning that withholding early access may matter less than it once did.

The limits of self-reliance

Manufacturing constraints impose a hard ceiling on China’s ambitions. SMIC’s yield rates for large AI chip dies run roughly 20% on its 7nm process, meaning four out of five chips coming off the production line fail to meet specifications.
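The yield figure above translates directly into volume and cost. Here is a rough sketch of that arithmetic, assuming the ~20% yield applies uniformly. The 100,000-chip target is a hypothetical round number chosen for illustration, not a reported order size, and `dies_started` and `cost_multiplier` are illustrative names.

```python
import math
from fractions import Fraction

def dies_started(good_chips_needed, yield_rate):
    """Dies that must be manufactured to end up with the needed good chips."""
    return math.ceil(good_chips_needed / yield_rate)

def cost_multiplier(yield_rate):
    """Factor by which per-die cost grows once failed dies are paid for."""
    return 1 / yield_rate

# ~20% yield as cited in the article; Fraction keeps the arithmetic exact.
smic_yield = Fraction(20, 100)

# Hypothetical target, for illustration only.
target_good_chips = 100_000

started = dies_started(target_good_chips, smic_yield)
scrapped = started - target_good_chips
multiplier = cost_multiplier(smic_yield)
```

At a 20% yield, 100,000 usable chips require 500,000 dies, with 400,000 scrapped, and each good chip carries five times the manufacturing cost of a single die.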

Without access to ASML’s extreme ultraviolet lithography equipment, SMIC relies on older manufacturing techniques that constrain both scale and cost-efficiency. Advanced packaging technologies comparable to those used by TSMC remain out of reach. And high-bandwidth memory production, a critical component for AI accelerators, is dominated by South Korean suppliers SK Hynix and Samsung, leaving China dependent on imports.

There is also an unresolved question about V4’s training hardware. A senior Trump administration official told Reuters in February 2026 that DeepSeek trained V4 on smuggled NVIDIA Blackwell chips at a data center in Inner Mongolia and may have attempted to strip technical indicators of American chip usage before publicly claiming it used Huawei hardware.

If accurate, V4’s performance would partly reflect NVIDIA’s training capabilities rather than a fully domestic stack. CNBC noted in its coverage of Cambricon that the technology of China’s chip companies “remains far behind that of NVIDIA’s” despite rapid revenue growth.

DeepSeek’s upcoming V4 release will test whether AI models built on domestic chips can compete with Western systems. If they can, China’s push for self-sufficiency gains strength; if they cannot, the release exposes a critical gap. Even partial success matters, because ongoing investment in Huawei-based infrastructure creates momentum that becomes harder to reverse over time, a shift even Jensen Huang has acknowledged.

In the end, the story circles back to the same constraint that once held neural networks back: compute. GPUs did not just accelerate artificial intelligence; they made it viable, turning abstract architectures into systems that could learn, scale, and reshape entire industries. That underlying reality has not changed. What is changing is how that compute is accessed, optimized, and controlled. As companies like DeepSeek experiment with software efficiency and alternatives to NVIDIA’s CUDA stack, and as Huawei pushes to build a domestic hardware ecosystem, the AI center of gravity may begin to fragment. But even in a world of competing stacks and geopolitical fault lines, the importance of high-performance computing does not diminish; it deepens. Whether it runs on NVIDIA GPUs or their emerging rivals, the future of AI will still be written in silicon, shaped by whoever can most effectively turn raw compute into intelligence at scale.

Roko's Pro Tip

💡 The chip you can’t buy shapes the model you can’t ignore. Watch CANN’s maturity curve, not the benchmark leaderboard.

Monday Poll

🗳️ DeepSeek's V4 on Huawei chips: what's the real outcome?

Bite-Sized Brains

$240M log splitter: Monarch Tractor burned through a quarter billion dollars on AI farm machines that hit vines, scared off users, and ended up being used to split wood.

Western open source's best shot: 26-person startup Arcee built a 400B-parameter open source model on $20M as the sovereign alternative to Chinese models and big-lab lock-in.

Anthropic's too-dangerous model: Claude Mythos is too risky to release publicly, so Anthropic is giving early access to Amazon, Apple, Google, and Microsoft to build defenses before attackers catch up.

The Toolkit

Synthesise AI: AI-native data platform that turns messy business data into clean customer, revenue, and product foundations for analytics and AI.

Runway: Generative video studio for creators and teams to script, edit, and produce high-quality AI video directly in the browser.

Tabnine: IDE-first AI coding assistant that autocompletes, generates, and refactors code with strong privacy controls for dev teams.
