Who Controls Military AI

Plus: data center emissions, cryonics tests, and art schools go AI.

Here’s what’s on our plate today:

🧪 Anthropic, the Pentagon, and the fight over AI war red lines.

🗞️ AI’s climate bill, frozen brains, and art schools split.

🧠 Roko’s Pro Tip on the difference between law, contracts, and vibes.

📊 Monday poll: Who should set the limits on military AI?

Let’s dive in. No floaties needed…

Goodies delivered straight into your inbox.

Get the chance to peek inside founders’ and leaders’ brains and see how they think about going from zero to 1 and beyond.

Join thousands of weekly readers at Google, OpenAI, Stripe, TikTok, Sequoia, and more.

Check all the tools and more here, and outperform the competition.

*This is sponsored content

The Laboratory

TL;DR

A $200M contract became a constitutional fight: Anthropic won a major Pentagon deal, but refused to allow Claude to be used for fully autonomous lethal weapons or domestic mass surveillance, triggering a federal backlash.

The courts moved quickly: Judge Rita Lin blocked the government’s ban, suggesting it likely violated free speech, due process, and the limits of procurement power.

The industry and market reacted fast: Employees, civil liberties groups, and even rival tech figures backed Anthropic, while consumer sentiment shifted sharply from ChatGPT toward Claude.

The business barely blinked: Despite canceled deals and government pressure, Anthropic’s revenue and valuation kept climbing, suggesting the fight may have strengthened its brand.

The deeper issue remains unresolved: The real question is not just who won this case, but who should set the limits on AI in warfare: governments, private labs, or laws that do not yet exist.

The $200M question: Who owns AI in warfare?

The relationship between America’s technology industry and its military predates Silicon Valley itself. As far back as the 1950s, the Pentagon’s Advanced Research Projects Agency funded projects that would later give rise to the internet, GPS, voice recognition, and, eventually, the search engine that became Google.

This relationship became even more profound as technology began to play an important role in the defense apparatus, serving as a mutually beneficial system largely invisible to the public.

However, despite the mutual benefit, the arrangement was fraught with tension, especially when it came to high-impact technologies like artificial intelligence.

In 2018, before Anthropic or OpenAI were household names, roughly 3,100 Google employees signed a letter protesting Project Maven, a Pentagon program that used AI to analyze drone surveillance footage. Google dropped the contract and adopted a set of AI principles that explicitly listed weapons and surveillance systems as applications the company would not pursue. But the stand did not last: in February 2025, Google quietly removed that pledge from its website. The reversal was not a one-off, and Anthropic would soon be embroiled in a similar tussle, this time much more public and involving the sitting U.S. administration.

Red lines vs contracts

In July 2025, Anthropic signed a $200M contract with the Pentagon, making its AI model Claude the first frontier system deployed on the Department of Defense’s classified networks.

For the Pentagon, this was a significant step toward integrating advanced AI across military operations, and for Anthropic, it was the largest government deal in the company’s history.

However, the two could not see eye to eye on the terms of Claude’s deployment. The DOD wanted Anthropic to make its models available for ‘all lawful purposes.’ Anthropic wanted two contractual restrictions: no use in fully autonomous lethal weapons (where a system selects and engages targets without human intervention) and no domestic mass surveillance.

Into the courts

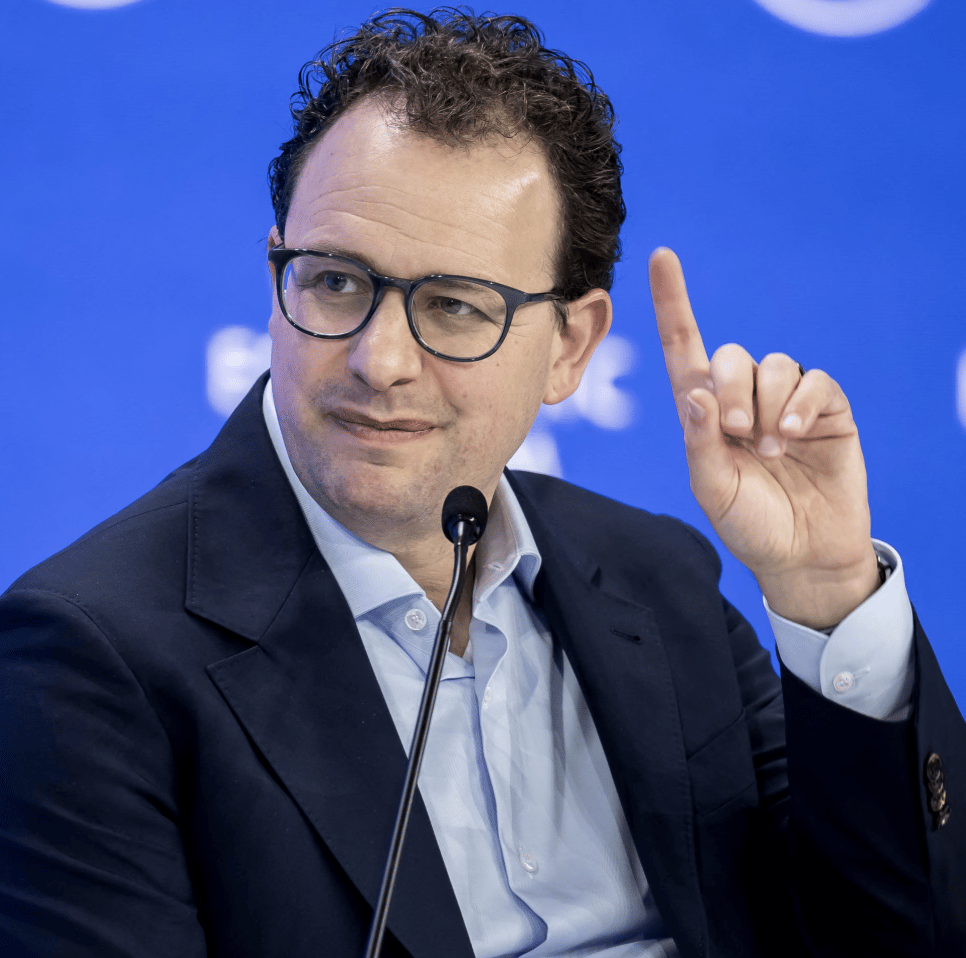

The disagreement didn’t stop there. After Anthropic CEO Dario Amodei warned that advanced AI systems are too unpredictable for autonomous lethal decisions, Pentagon CTO Emil Michael pushed back, publicly urging the company to embrace military use of AI.

Defense Secretary Pete Hegseth then set a deadline for Amodei: agree to unrestricted use or face consequences. When Anthropic refused to budge, President Trump ordered federal agencies to stop using Anthropic’s products. Later, Hegseth designated the company a supply chain risk to national security, invoking a statute originally designed to address threats from foreign adversaries.

Anthropic responded by suing more than a dozen federal agencies and 18 officials. U.S. District Judge Rita Lin called the government’s actions “troubling” and questioned its national security rationale.

Two days later, she issued a preliminary injunction blocking the ban, ruling that it likely violated the First Amendment, denied due process, and exceeded statutory authority.

The order is paused for seven days to allow an appeal, and Pentagon CTO Emil Michael has indicated the government will push forward, leaving the case unresolved.

Meanwhile, the dispute has sparked a broader shift in the AI industry. Nearly 900 employees at Google and OpenAI signed an open letter urging their companies to adopt similar limits. When Anthropic filed suit, employees from both firms, including Google’s Jeff Dean, backed it in court. Microsoft also filed a supporting brief, joined by retired military officials and groups such as the EFF and the Cato Institute.

Market backlash

The consumer response was immediate: ChatGPT uninstalls in the U.S. jumped 295% the day after OpenAI’s Pentagon deal, while one-star reviews surged 775%. A #QuitGPT campaign claimed that over 1.5M users pledged to delete the app. At the same time, Claude’s U.S. downloads rose sharply, pushing it to the top of the App Store.

On the enterprise side, the impact was more mixed. Over 100 Anthropic customers expressed concern after the designation, with the company estimating billions in potential 2026 losses and $180M in deals already collapsing.

Yet commercially, Anthropic continued to grow. Its annualized revenue hit $19B in March 2026, up from $9B at the end of 2025, alongside a $15B funding round valuing it at $380B, suggesting the controversy strengthened its position rather than weakened it.

More than a contract

The Anthropic-Pentagon dispute is about more than a $200M contract. It is about who gets to decide the boundaries of AI use in warfare, and whether those boundaries can survive contact with the world’s largest military customer.

The Pentagon’s position is grounded in existing policy, which already requires human oversight in autonomous weapons. From its perspective, Anthropic’s restrictions are redundant and risk giving private companies undue control over how the military uses tools it has paid for. There is also a strategic concern: if U.S. firms impose limits while Chinese companies do not, it could create a competitive disadvantage for U.S. firms.

Anthropic, however, argues that current laws leave critical gaps, particularly around surveillance, making contractual safeguards more meaningful than regulation alone. Others push the debate further, questioning whether private companies, rather than elected governments, should be drawing these lines in the first place.

Judge Rita Lin’s ruling adds a constitutional dimension. If it holds, it would establish that the government cannot use its procurement power to penalize companies for publicly disagreeing with defense policy. This precedent could ripple across the tech industry.

Reasking an old question

The history of warfare is, in large part, a history of technology: the longbow, the telegraph, radar, nuclear fission, and GPS. Each forced society to answer the same question: who controls how a powerful new tool is used in conflict, and what limits apply? Those answers have never come easily or quickly, and they have never come solely from the technology sector.

What makes AI different is the speed and the stakes. The gap between a research breakthrough and a deployed military capability is shrinking from decades to months. The companies building these systems understand their limitations in ways that procurement offices and congressional committees often do not. At the same time, those companies are private actors with commercial incentives that do not always align with public accountability.

The Anthropic-Pentagon dispute will eventually produce a legal outcome, a ruling that either upholds or overturns Judge Lin’s injunction, and eventually a legislative framework that either codifies or ignores the questions the case has raised. But the deeper question will outlast any single court decision. It is whether the institutions that govern the use of force can adapt fast enough to ensure that the most consequential technology of this century remains, in the ways that matter most, under human control.

Roko’s Pro Tip

💡 If your AI vendor says ‘human oversight is already policy,’ ask whether that protection lives in law, in contract language, or in vibes. Those are not the same thing.

The AI Talent Bottleneck Ends Here

If you're building applied AI, the hard part is rarely the first prototype. You need engineers who can design and deploy models that hold up in production, then keep improving them once they're live.

Deep learning and LLM expertise

Production deployment experience

40–60% cost savings

This is the kind of talent you get with Athyna Intelligence—vetted LATAM PhDs and Masters working in U.S.-aligned time zones.

*This is sponsored content

Monday Poll

🗳️ Who should set the limits on military AI use?

Bite-Sized Brains

AI climate bill grows: AP reports the AI data center boom is pushing Big Tech further off climate targets, with power demand rising so fast that more natural gas is filling the gap.

Frozen brain, still dead: A cryobiologist thawed and sampled fragments of a friend’s brain after a decade in cryogenic storage, saying the tissue held up surprisingly well even though revival remains science fiction.

Art schools split: The Verge says creative schools are now telling students to learn generative AI or risk falling behind, even as many faculty and students deeply resent the shift.

The Toolkit

Mirage: Browser-based 3D design app that lets you prototype interactive product shots and visuals directly in your editor.

Speechmatics: Speech recognition engine for real-time, multilingual transcription and voice analytics across audio and video.

Superhuman: Rebranded Grammarly platform offering an AI assistant that drafts, edits, and manages communication across email and work apps.
